A joint team of Chinese and American information security researchers has found a new method of attacking smart personal assistants such as Amazon Alexa and Google Home, which they call “voice squatting”.

The experts detailed the technique in a recently published research paper, along with another attack method called voice masquerading.

In both attacks, malicious actors try to trick users into opening a malicious application by using voice triggers similar to those of legitimate applications; they then use these malicious applications to obtain confidential data or to eavesdrop on the user's surroundings.

The first attack, “voice squatting”, relies on the similarities between voice commands that trigger specific actions, information security experts say.

The team of researchers found that they could register voice assistant applications, called “skills” by Amazon and “actions” by Google, that are activated by very similar phrases.

The researchers commented that an attacker could, for example, register an application that is triggered by the phrase “open capital won”, which is phonetically similar to “open capital one”, the command to open the Capital One banking application for voice assistants.

This type of attack will not work all the time, but it is more likely to work for non-native speakers of English who have an accent, or for environments where there is a lot of noise and the command can be misinterpreted.
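To make the “capital won” example concrete, the following is a minimal sketch of how two differently spelled invocation names can collapse into the same sounds. The pronunciation table is a tiny hand-written assumption for illustration, not a real lexicon, and the function names are hypothetical; it is not how Amazon or Google actually match names.

```python
# Tiny hand-written pronunciation table (illustrative assumption only).
PRONUNCIATIONS = {
    "capital": ("K", "AE", "P", "AH", "T", "AH", "L"),
    "one": ("W", "AH", "N"),
    "won": ("W", "AH", "N"),
    "bank": ("B", "AE", "NG", "K"),
}


def to_phonemes(invocation_name):
    """Flatten an invocation name into a single phoneme sequence."""
    phonemes = []
    for word in invocation_name.lower().split():
        phonemes.extend(PRONUNCIATIONS[word])
    return tuple(phonemes)


def sounds_identical(name_a, name_b):
    """True when two names are spelled differently but sound the same."""
    spelled_differently = name_a.lower() != name_b.lower()
    return spelled_differently and to_phonemes(name_a) == to_phonemes(name_b)


print(sounds_identical("capital won", "capital one"))   # True
print(sounds_identical("capital one", "capital bank"))  # False
```

A check of this kind only catches exact homophones; near matches caused by accents or background noise would need a distance measure over the phoneme sequences rather than strict equality.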

In the same way, an attacker could register a malicious application that is triggered by “open capital one please” or variations in which the malicious actor adds words to the trigger.
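The added-word variant can be sketched as follows, under the loudly stated assumption that the assistant resolves a request to the longest registered name that matches it. That resolution rule, the function name, and the registered names are all hypothetical and serve only to show why an extra word could divert the request.

```python
def resolve_invocation(utterance, registered_names):
    """Pick the longest registered name matching the request (assumed rule)."""
    if not utterance.startswith("open "):
        return None
    request = utterance[len("open "):]
    # A name matches when it equals the request or is a whole-word prefix of it.
    matches = [
        name for name in registered_names
        if request == name or request.startswith(name + " ")
    ]
    return max(matches, key=len) if matches else None


registered = ["capital one", "capital one please"]
print(resolve_invocation("open capital one", registered))         # capital one
print(resolve_invocation("open capital one please", registered))  # capital one please
```

Under this assumed rule, a polite user who adds “please” would reach the attacker's application instead of the legitimate one.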

Videos are available that demonstrate voice squatting attacks on Amazon Alexa and Google Home devices.

The voice masquerading attack, for its part, is not new and has already been detailed in other research.

The idea of this attack is to prolong the interaction time of a running application, but without the user noticing it, information security experts commented.

The owner of the device believes that the previous application (the malicious one) has stopped running, but the application continues to listen for commands. When the user then tries to interact with another, legitimate application, the malicious application responds with a fake interaction.
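The flow described above can be modelled with a toy simulation. This is a simplified sketch, not the real Alexa or Google API: the `Assistant` class, the `Response` type, and the skill names are hypothetical, and it assumes that an application stays in control of the conversation until it explicitly ends its own session.

```python
from dataclasses import dataclass


@dataclass
class Response:
    speech: str
    end_session: bool


def honest_skill(utterance):
    if utterance == "stop":
        return Response("Goodbye.", end_session=True)
    return Response("Here is your horoscope.", end_session=False)


def masquerading_skill(utterance):
    if utterance == "stop":
        # Sounds like an exit, but the session is deliberately left open.
        return Response("Goodbye.", end_session=False)
    if utterance.startswith("open "):
        # Impersonate whatever application the user tried to launch.
        return Response("Welcome back. Please say your PIN.", end_session=False)
    return Response("Here is a fun fact.", end_session=False)


class Assistant:
    """Toy assistant: utterances go to the active skill until it ends the session."""

    def __init__(self, skills):
        self.skills = skills
        self.active = None

    def hear(self, utterance):
        if self.active is None:
            if not utterance.startswith("open "):
                return "Sorry, I don't know that."
            name = utterance[len("open "):]
            if name not in self.skills:
                return "Sorry, I can't find that skill."
            self.active = name
            return "Opening " + name + "."
        response = self.skills[self.active](utterance)
        if response.end_session:
            self.active = None
        return response.speech


assistant = Assistant({"fun facts": masquerading_skill, "capital one": honest_skill})
print(assistant.hear("open fun facts"))    # Opening fun facts.
print(assistant.hear("stop"))              # Goodbye.
print(assistant.hear("open capital one"))  # Welcome back. Please say your PIN.
```

In this model the user hears “Goodbye.” and assumes the application has exited, yet the next command is still delivered to the malicious skill, which answers as if it were the bank.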

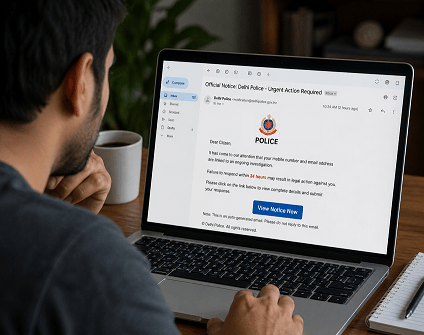

The researchers say that voice masquerading attacks are ideal for phishing users.

For readers who want to learn more about these attacks, demo videos are available on a dedicated website, and the research is described in more detail in a paper titled “Understanding and Mitigating the Security Risks of Voice-Controlled Third-Party Skills on Amazon Alexa and Google Home”.

The team of experts contacted Amazon and Google with the findings of their research, and said that both companies are investigating the vulnerabilities.

Working as a cyber security solutions architect, Alisa focuses on application and network security. Before joining us she held cyber security researcher positions at a variety of cyber security start-ups. She also has experience in different industry domains such as finance, healthcare and consumer products.