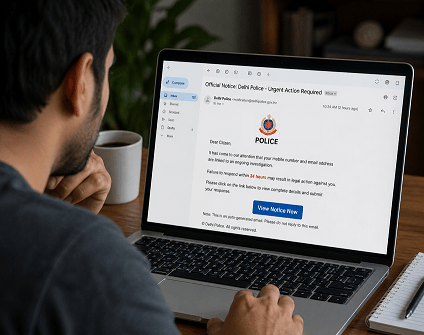

An investigation conducted by IT security audit specialists from cybersecurity firm Symantec has detected at least three cases of financial fraud involving the use of fake audio generated by artificial intelligence software, a practice known as deepfake, frequently used on adult content sites.

This kind of software can be trained using a considerable amount of audio records; in this case, the records corresponded to bank executives who never imagined that this kind of unsecured information could be used for a cyberattack.

Hugh Thompson, IT security audit specialist in charge of this investigation, says threat actors could have used any voice records at their disposal, including company-owned audiovisual material, bankers’ media statements, conferences, voice notes, among other resources to build a victim’s voice model. “To work as expected, the voice record created with artificial intelligence must be almost perfect”, he says.

According to the research, hackers even edited the audio generated by artificial intelligence, adding background noise to make it harder for digital forensics experts to detect it. “It’s a highly sophisticated scam, any cybersecurity expert could be tricked using this attack variant,” Thompson says.

Dr. Alexander Adam, IT security audit specialist at a major university, says that the development and implementation of artificial intelligence software requires enormous economic, time and intellectual resources, so threat actors behind this campaign must have significant support. “Only the process of training artificial intelligence software would cost thousands of dollars,” he added.

On the other hand, specialists from the International Cyber Security Institute (IICS) add that huge computing capabilities are required to build an artificial intelligence tool capable of mimicking a person’s voice, as well as the sources of where victims’ voice records are taken should be clear and durable enough to help the software fully detect factors such as intonation, speech rhythm and the most frequently used words of the attack target.

He is a well-known expert in mobile security and malware analysis. He studied Computer Science at NYU and started working as a cyber security analyst in 2003. He is actively working as an anti-malware expert. He also worked for security companies like Kaspersky Lab. His everyday job includes researching about new malware and cyber security incidents. Also he has deep level of knowledge in mobile security and mobile vulnerabilities.