Researchers at the University of Maryland have developed a new attack that slows down machine learning systems and could lead to critical server and application failures. The attack, presented at a recent cybersecurity event, works by disrupting optimization techniques used in neural networks.

A neural network is a type of machine learning model that can demand large amounts of memory and processing power, which makes it hard to run on resource-constrained devices. Many of these systems therefore send data to a cloud server for processing, and developers apply various techniques to reduce resource demand as much as possible.

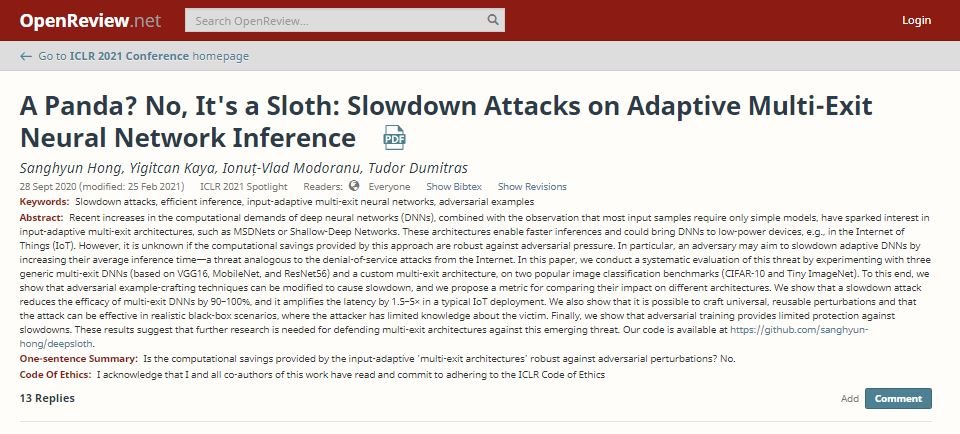

One of these optimization methods is known as multi-exit or early-exit architecture, which lets a neural network stop processing an input as soon as an intermediate prediction reaches an acceptable confidence level. The approach is relatively new, although experts say it is already well accepted.
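To make the early-exit idea concrete, here is a minimal Python sketch. It is not taken from the research: the function `early_exit_predict`, the toy `blocks` and `heads`, and the 0.9 confidence threshold are all assumptions chosen for illustration. Each block stands in for a group of layers, and each head is a small classifier attached after that block.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def early_exit_predict(x, blocks, heads, threshold=0.9):
    """Run blocks in order and stop at the first confident exit.

    Returns (predicted_label, number_of_blocks_executed).
    All names and the threshold are hypothetical.
    """
    hidden = x
    for depth, (block, head) in enumerate(zip(blocks, heads), start=1):
        hidden = block(hidden)              # the expensive part
        probs = softmax(head(hidden))       # cheap classifier at this exit
        confidence = max(probs)
        # Exit early if confident; the last exit always answers.
        if confidence >= threshold or depth == len(blocks):
            return probs.index(confidence), depth

# Toy network: every block doubles the features, every head reads them as logits.
blocks = [lambda h: [2 * v for v in h]] * 3
heads = [lambda h: h] * 3

easy = early_exit_predict([3.0, 0.0], blocks, heads)   # clear-cut input
hard = early_exit_predict([0.2, 0.0], blocks, heads)   # ambiguous input
print("easy input:", easy)
print("hard input:", hard)
```

In this toy setup the clear-cut input leaves at the first exit, while the ambiguous one has to pass through all three blocks. The savings of a multi-exit network come from most real inputs behaving like the first case.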

Tudor Dumitras, who led the research, developed an attack that directly targets this optimization. Known as DeepSloth, the attack makes imperceptible changes to the input data that prevent the neural network from taking its early exits, forcing it to run the full computation on that input.
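The intuition behind such a slowdown perturbation can be shown with a toy example. The sketch below is not the researchers' implementation: it assumes a single linear, two-class exit with made-up weights `w`, a hypothetical helper `slowdown_step`, and a perturbation budget `eps` that is far larger than anything imperceptible. It nudges every input feature by at most `eps` in the direction that shrinks the exit's score, so the exit's confidence falls below the threshold while the predicted class stays the same.

```python
import math

def score(w, b, x):
    """Raw score of a linear two-class exit (positive means class 1)."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def confidence(w, b, x):
    """Probability the exit assigns to its own predicted class."""
    p = 1.0 / (1.0 + math.exp(-score(w, b, x)))
    return max(p, 1.0 - p)

def sign(v):
    return (v > 0) - (v < 0)

def slowdown_step(w, b, x, eps):
    """One bounded step that pushes the exit toward a 50/50 output.

    Each feature moves by at most eps (an L-infinity budget).
    Hypothetical helper for illustration only.
    """
    direction = sign(score(w, b, x))
    return [xi - eps * direction * sign(wi) for wi, xi in zip(w, x)]

# Toy exit and input; all values are assumptions for the example.
w, b = [1.0, -2.0, 0.5], 0.0
x = [1.0, -0.8, 0.4]
threshold, eps = 0.9, 0.3

x_adv = slowdown_step(w, b, x, eps)
print("clean confidence:    ", round(confidence(w, b, x), 3))
print("perturbed confidence:", round(confidence(w, b, x_adv), 3))
print("exit taken on clean input:    ", confidence(w, b, x) >= threshold)
print("exit taken on perturbed input:", confidence(w, b, x_adv) >= threshold)
```

A real attack would have to lower confidence at every exit of a deep network at once and keep the change small enough to go unnoticed, which this single-step, single-exit sketch does not attempt.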

According to the researcher, the attack effectively cancels out the benefit of this optimization: “This kind of architecture can reach half of its regular resource consumption; using DeepSloth, it is possible to reduce the effectiveness of the early exit approach by nearly 100% similar to what would happen in a denial of service (DoS) attack.”

In scenarios where a multi-exit network is split between on-premises devices and a cloud deployment, the attack can also force the device to send all of its data to the server, which can cause a range of failures in the results that the network's administrators expect.

Dumitras concluded his presentation by asking developers to adopt new security approaches to protect these systems: “This is only the first of many attacks on neural networks, so the community will need to take a preventive approach before it is too late.”
