Meta, the parent company of Facebook, Instagram, and WhatsApp, regularly shares its threat research with the wider cyber defense community and with other security professionals. An expert from Meta noted that “threat actors” have been offering internet browser extensions that claim to provide generative AI capabilities but actually contain malicious software designed to infect devices. The expert added that hackers commonly use attention-grabbing advances such as generative AI to trick people into clicking booby-trapped web links or installing apps that steal data.

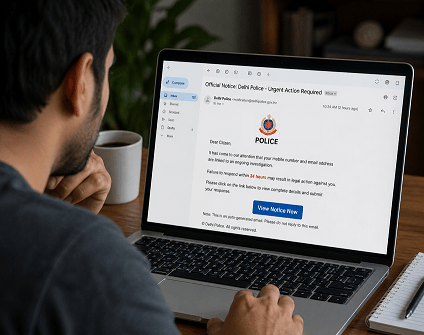

Meta’s security team has discovered and blocked more than a thousand web addresses that promise ChatGPT-like tools but are actually traps set by hackers. Although the company has not yet seen hackers use generative AI for anything other than bait, the Meta expert warned that the company is preparing for the inevitable moment when the technology is turned into a weapon. “Generative AI holds great promise, and bad actors know it, so we should all be very vigilant to stay safe,” he said.

According to Guy Rosen, Meta’s chief information security officer, the company discovered malware masquerading as ChatGPT and similar AI tools during the previous month. Meta’s researchers noted that the latest wave of malware operations has seized on generative AI, a technology that has captured the public’s imagination and enthusiasm.

Nathaniel Gleicher, Meta’s head of security policy, said the company’s teams are working on ways to use generative AI to defend against hackers and fraudulent online influence campaigns. “We have teams that are already thinking through how (generative AI) could be abused, and the defenses we need to put in place to counter that,” he said. “We are getting ready for that right now.”

In recent years, businesses across many sectors have adopted generative AI for applications ranging from automated product design to creative writing. As the technology becomes more widespread, however, hackers have begun to exploit the interest surrounding it. One Meta executive compared the situation to cryptocurrency scams, which multiplied as public interest in digital money grew: “From the perspective of a bad actor, ChatGPT is the new cryptocurrency.”

As more businesses adopt generative AI, individuals and organizations need to stay alert to the risks that come with it. Keeping up with the latest developments in cybersecurity and regularly updating their security measures will help them defend against the growing threat of cyberattacks.

Information security specialist, currently working as a risk infrastructure specialist and investigator.

15 years of experience in risk and control processes, security audit support, business continuity design and support, workgroup management, and information security standards.